Cross-host networking options include Docker's native overlay and macvlan drivers.

Third-party options: commonly used ones include flannel, weave, and calico.

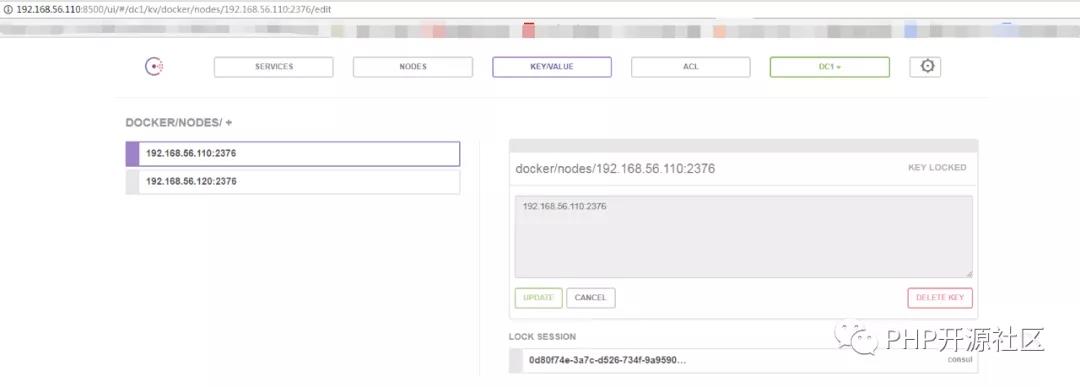

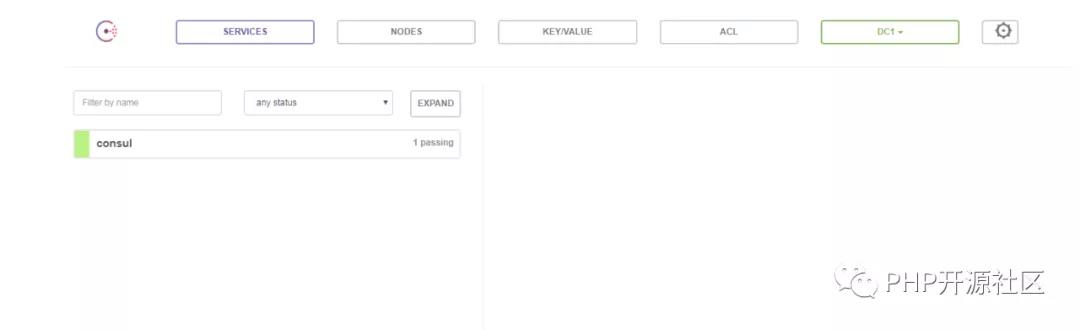

[root@linux-node1 ~]# docker run -d -p 8500:8500 -h consul --name consul progrium/consul -server -bootstrap

[root@linux-node1 ~]# netstat -tulnp |grep 8500

tcp6 0 0 :::8500 :::* LISTEN 61092/docker-proxy-

Once the container is up, Consul can be reached at http://192.168.56.110:8500.

Next, edit the Docker daemon unit file /usr/lib/systemd/system/docker.service on node1 and node2. --cluster-store specifies the address of Consul. --cluster-advertise tells Consul the address at which this host can be reached.

[root@linux-node1 ~]# cat /usr/lib/systemd/system/docker.service

......

ExecStart=/usr/bin/dockerd-current \

--exec-opt native.cgroupdriver=systemd \

--add-runtime docker-runc=/usr/libexec/docker/docker-runc-current \

--default-runtime=docker-runc \

--cluster-store=consul://192.168.56.110:8500 \

--userland-proxy-path=/usr/libexec/docker/docker-proxy-current \

--seccomp-profile=/etc/docker/seccomp.json \

--cluster-advertise=eth0:2376 \

$OPTIONS \

......

[root@linux-node1 ~]# systemctl daemon-reload

[root@linux-node1 ~]# systemctl restart docker

After the restart, node1 and node2 register themselves in the Consul database automatically.
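Because the same two flags have to be added on every host, a quick check before restarting the daemon can catch a missed edit. The following is a minimal sketch; the function name `has_cluster_flags` is hypothetical, and it only looks for the flag names, not for valid values.

```shell
#!/bin/sh
# Hypothetical helper: succeed only if the given unit file mentions both
# --cluster-store and --cluster-advertise. It does not validate the values.
has_cluster_flags() {
    unit_file=$1
    grep -q -e '--cluster-store=' "$unit_file" &&
        grep -q -e '--cluster-advertise=' "$unit_file"
}

# Usage on each node (path as shown by the cat command in this article):
#   has_cluster_flags /usr/lib/systemd/system/docker.service \
#       && echo "cluster flags present" \
#       || echo "cluster flags missing"
```

Running this on node1 and node2 before `systemctl daemon-reload` makes it easier to spot a host that was skipped.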

(2) Create the overlay network

Create the overlay network ov_net1 on node1:

[root@linux-node1 ~]# docker network create -d overlay ov_net1 # -d overlay sets the driver to overlay

[root@linux-node1 ~]# docker network ls # list the current networks

NETWORK ID NAME DRIVER SCOPE

8eb7fd71a52c bridge bridge local

6ba20168e34f host host local

4e896f9ac4bc none null local

d9652d84d9de ov_net1 overlay global

[root@linux-node2 ~]# docker network ls # list the current networks

NETWORK ID NAME DRIVER SCOPE

94a3bc259414 bridge bridge local

f8443f6cb8d2 host host local

2535ab8f3493 none null local

d9652d84d9de ov_net1 overlay global

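ov_net1 is also visible on node2. When ov_net1 was created, node1 stored the overlay network's information in Consul, and node2 read the new network's data from Consul. From then on, any change to ov_net1 is synchronized to both node1 and node2.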
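To confirm on each host that the network really arrived through the shared store, the `docker network ls` output can be filtered for a network whose driver is overlay and whose scope is global. This is a small sketch; `has_global_overlay` is a hypothetical helper name, and it assumes the four-column layout shown in the listings above.

```shell
#!/bin/sh
# Hypothetical helper: read `docker network ls` output on stdin and succeed
# if the named network is listed with driver "overlay" and scope "global".
has_global_overlay() {
    awk -v name="$1" '
        $2 == name && $3 == "overlay" && $4 == "global" { found = 1 }
        END { exit !found }
    '
}

# Usage on node1 or node2:
#   docker network ls | has_global_overlay ov_net1 && echo "ov_net1 is synced"
```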

[root@linux-node1 ~]# docker network inspect ov_net1 # show the details of ov_net1

[

{

"Name": "ov_net1",

"Id": "d9652d84d9de6d1145c77d0254c90164b968f72f2eda4aee43d56ab03f8530ed",

"Created": "2018-04-19T21:50:29.128801226+08:00",

"Scope": "global",

"Driver": "overlay",

"EnableIPv6": false,

"IPAM": {

"Driver": "default",

"Options": {},

"Config": [

{

"Subnet": "10.0.0.0/24",

"Gateway": "10.0.0.1"

}

]

},

"Internal": false,

"Attachable": false,

"Containers": {},

"Options": {},

"Labels": {}

}

]

(3) Run a container on the overlay network

[root@linux-node1 ~]# docker run -itd --name bbox1 --network ov_net1 busybox

340f748b06786c0f81c3e26dd9dbd820dafcdf73baa9232f02aece8d4c89a73b

[root@linux-node1 ~]# docker exec bbox1 ip r # show the container's network configuration

default via 172.18.0.1 dev eth1

10.0.0.0/24 dev eth0 scope link src 10.0.0.2

172.18.0.0/16 dev eth1 scope link src 172.18.0.2

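bbox1 has two network interfaces, eth0 and eth1. eth0 has IP 10.0.0.2 and is attached to the overlay network ov_net1. eth1 has IP 172.18.0.2, and the container's default route goes through eth1. Where does eth1 come from?

Docker creates a bridge network named docker_gwbridge, which gives every container attached to an overlay network access to external networks.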

[root@linux-node1 ~]# docker network ls

NETWORK ID NAME DRIVER SCOPE

8eb7fd71a52c bridge bridge local

751bd423a345 docker_gwbridge bridge local

6ba20168e34f host host local

4e896f9ac4bc none null local

d9652d84d9de ov_net1 overlay global

[root@linux-node1 ~]# docker network inspect docker_gwbridge

[

{

"Name": "docker_gwbridge",

"Id": "751bd423a345a7beaa6b4cbf2a69a7687e3d8b7e656952090c4b94aec54ec1b5",

"Created": "2018-04-21T16:11:57.684140362+08:00",

"Scope": "local",

"Driver": "bridge",

"EnableIPv6": false,

"IPAM": {

"Driver": "default",

"Options": null,

"Config": [

{

"Subnet": "172.18.0.0/16",

"Gateway": "172.18.0.1"

}

]

},

"Internal": false,

"Attachable": false,

"Containers": {

"340f748b06786c0f81c3e26dd9dbd820dafcdf73baa9232f02aece8d4c89a73b": {

"Name": "gateway_340f748b0678",

"EndpointID": "64cd599aaa2408ca0a1e595264e727b09d26482ba4d2aa18d97862ed29e23b51",

"MacAddress": "02:42:ac:12:00:02",

"IPv4Address": "172.18.0.2/16",

"IPv6Address": ""

}

},

"Options": {

"com.docker.network.bridge.enable_icc": "false",

"com.docker.network.bridge.enable_ip_masquerade": "true",

"com.docker.network.bridge.name": "docker_gwbridge"

},

"Labels": {}

}

]

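The output of docker network inspect docker_gwbridge confirms that the address range of docker_gwbridge is 172.18.0.0/16 and that the only container currently attached is bbox1 (172.18.0.2).

The gateway of this network is the IP of the docker_gwbridge bridge itself, 172.18.0.1.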

[root@linux-node1 ~]# ifconfig docker_gwbridge

docker_gwbridge: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 172.18.0.1 netmask 255.255.0.0 broadcast 0.0.0.0

inet6 fe80::42:e4ff:feb8:22cb prefixlen 64 scopeid 0x20<link>

ether 02:42:e4:b8:22:cb txqueuelen 0 (Ethernet)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0
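The relationship between the container address and the bridge subnet can be checked with plain integer arithmetic. The sketch below tests whether an IPv4 address falls inside a CIDR block; `ip_to_int` and `ip_in_cidr` are hypothetical helper names, and the code does no input validation.

```shell
#!/bin/sh
# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
    old_ifs=$IFS
    IFS=.
    set -- $1            # split the address on dots into $1..$4
    IFS=$old_ifs
    echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# Succeed if address $1 lies inside CIDR block $2 (for example 172.18.0.0/16).
ip_in_cidr() {
    addr=$1
    net=${2%/*}
    bits=${2#*/}
    mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
    [ $(( $(ip_to_int "$addr") & mask )) -eq $(( $(ip_to_int "$net") & mask )) ]
}

# Usage with the values from the inspect output:
#   ip_in_cidr 172.18.0.2 172.18.0.0/16 && echo "bbox1 eth1 is on docker_gwbridge"
```

The same check shows that bbox1's overlay address 10.0.0.2 is not part of 172.18.0.0/16, which is why the container needs two separate interfaces.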

This lets container bbox1 reach external networks through docker_gwbridge.

[root@linux-node1 ~]# docker exec bbox1 ping -c 2 www.baidu.com

PING www.baidu.com (58.217.200.112): 56 data bytes

64 bytes from 58.217.200.112: seq=0 ttl=127 time=32.465 ms

64 bytes from 58.217.200.112: seq=1 ttl=127 time=32.754 ms

--- www.baidu.com ping statistics ---

2 packets transmitted, 2 packets received, 0% packet loss

round-trip min/avg/max = 32.465/32.609/32.754 ms
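External traffic leaves through whichever interface holds the default route. A short filter over the `ip r` output makes that explicit. This is a sketch that assumes the `default via <gateway> dev <interface>` format shown earlier; `default_route_dev` is a hypothetical name.

```shell
#!/bin/sh
# Hypothetical helper: read `ip r` output on stdin and print the interface
# named after "dev" on the default route line.
default_route_dev() {
    awk '$1 == "default" {
        for (i = 2; i < NF; i++)
            if ($i == "dev") { print $(i + 1); exit }
    }'
}

# Usage:
#   docker exec bbox1 ip r | default_route_dev
```

For bbox1 this should name eth1, the interface attached to docker_gwbridge, rather than the overlay interface eth0.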

(4) How does overlay implement cross-host communication?

[root@linux-node2 ~]# docker run -itd --name bbox2 --network ov_net1 busybox

[root@linux-node2 ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

68c81b90fb86 busybox "sh" 2 days ago Up 2 days bbox2

[root@linux-node2 ~]# docker exec bbox2 ip r

default via 172.18.0.1 dev eth1

10.0.0.0/24 dev eth0 scope link src 10.0.0.3

172.18.0.0/16 dev eth1 scope link src 172.18.0.2

bbox2's IP is 10.0.0.3, and it can ping bbox1 directly:

[root@linux-node2 ~]# docker exec bbox2 ping -c 3 bbox1

PING bbox1 (10.0.0.2): 56 data bytes

64 bytes from 10.0.0.2: seq=0 ttl=64 time=154.064 ms

64 bytes from 10.0.0.2: seq=1 ttl=64 time=0.789 ms

64 bytes from 10.0.0.2: seq=2 ttl=64 time=0.539 ms

--- bbox1 ping statistics ---

3 packets transmitted, 3 packets received, 0% packet loss

round-trip min/avg/max = 0.539/51.797/154.064 ms

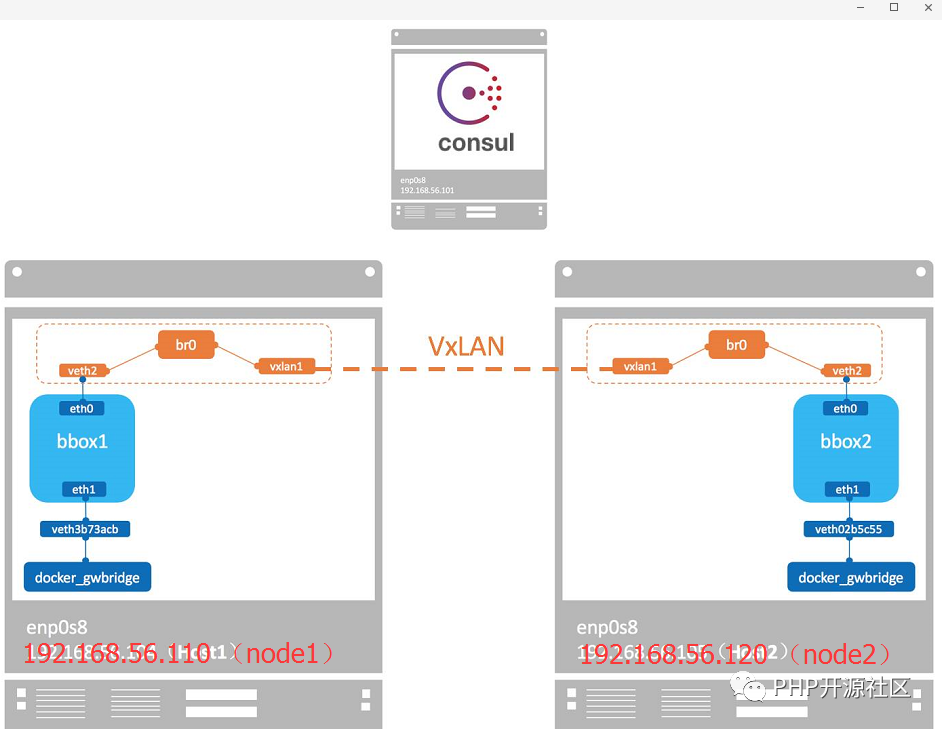

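Docker creates a separate network namespace for each overlay network. Inside it is a Linux bridge, br0. Each endpoint is still implemented as a veth pair: one end is attached to the container (as eth0) and the other to br0 in the namespace.

Besides connecting all the endpoints, br0 also connects a vxlan device, which is used to build VXLAN tunnels to the other hosts. Traffic between containers travels through these tunnels. The figure above shows the logical topology.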
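The vxlan device described above can be inspected from the host. The sketch below extracts the VXLAN ID from `ip -d link show` output. Several things here are assumptions rather than facts from this article: the helper name `vxlan_ids`, the location /var/run/docker/netns where Docker usually keeps its namespaces, the `<namespace>` placeholder, and the `vxlan id <N>` wording printed by iproute2.

```shell
#!/bin/sh
# Hypothetical helper: read `ip -d link show` output on stdin and print the
# number that follows each "vxlan id" pair of words.
vxlan_ids() {
    awk '{
        for (i = 1; i < NF - 1; i++)
            if ($i == "vxlan" && $(i + 1) == "id") print $(i + 2)
    }'
}

# Usage (run as root on a Docker host; <namespace> is a placeholder to be
# replaced with a name printed by `ip netns`):
#   ln -s /var/run/docker/netns /var/run/netns
#   ip netns
#   ip netns exec <namespace> ip -d link show type vxlan | vxlan_ids
```

Running it for the namespace of each overlay network is one way to see that each network has its own VXLAN ID.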

(5) How are overlay networks isolated?

Different overlay networks are isolated from each other. We create a second overlay network, ov_net2, and run container bbox3 on it:

[root@linux-node1 ~]# docker run -itd --name bbox3 --network ov_net2 busybox

946def609a7b183f68b8398b35fd3f72dc28bff47cc2ba63467f266fde297d5a

[root@linux-node1 ~]# docker exec -it bbox3 ip r

default via 172.18.0.1 dev eth1

10.0.1.0/24 dev eth0 scope link src 10.0.1.2 ## bbox3's IP is 10.0.1.2

172.18.0.0/16 dev eth1 scope link src 172.18.0.4

[root@linux-node1 ~]# docker exec -it bbox3 ping -c 2 10.0.0.3 # bbox3 cannot ping bbox2

PING 10.0.0.3 (10.0.0.3): 56 data bytes

^C

--- 10.0.0.3 ping statistics ---

2 packets transmitted, 0 packets received, 100% packet loss

To let bbox3 communicate with bbox2, connect bbox3 to ov_net1 as well.

[root@linux-node1 ~]# docker network connect ov_net1 bbox3

[root@linux-node1 ~]# docker exec -it bbox3 ping -c 2 10.0.0.3

PING 10.0.0.3 (10.0.0.3): 56 data bytes

64 bytes from 10.0.0.3: seq=0 ttl=64 time=34.110 ms

64 bytes from 10.0.0.3: seq=1 ttl=64 time=0.745 ms

--- 10.0.0.3 ping statistics ---

2 packets transmitted, 2 packets received, 0% packet loss

round-trip min/avg/max = 0.745/17.427/34.110 ms
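The two ping runs differ only in the reported packet loss, so an isolation test can be scripted by parsing that figure. This is a sketch that assumes the busybox ping summary line shown above; `packet_loss_percent` and `is_unreachable` are hypothetical names.

```shell
#!/bin/sh
# Hypothetical helper: read ping output on stdin and print the packet loss
# percentage from the summary line (for example 0 or 100).
packet_loss_percent() {
    sed -n 's/.* \([0-9][0-9]*\)% packet loss.*/\1/p'
}

# Succeed if the ping output on stdin reports 100% packet loss.
is_unreachable() {
    [ "$(packet_loss_percent)" = "100" ]
}

# Usage:
#   docker exec bbox3 ping -c 2 10.0.0.3 | is_unreachable \
#       && echo "isolated" || echo "reachable"
```

Before `docker network connect ov_net1 bbox3` the check should report isolation, and afterwards it should report that bbox2 is reachable.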

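By default Docker assigns each overlay network a subnet with a 24-bit mask (10.0.X.0/24). All hosts share this subnet, and containers are given IPs from it sequentially as they start. The address range can also be set explicitly with --subnet.

[root@linux-node1 ~]# docker network create -d overlay --subnet 10.22.1.0/24 ov_net3

a111191fa67e500015a2f3ab8166793d23f0adef4d66bfcee81166127915ff9f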

[root@linux-node1 ~]# docker network ls

NETWORK ID NAME DRIVER SCOPE

8eb7fd71a52c bridge bridge local

751bd423a345 docker_gwbridge bridge local

6ba20168e34f host host local

4e896f9ac4bc none null local

d9652d84d9de ov_net1 overlay global

667cc7ef7427 ov_net2 overlay global

a111191fa67e ov_net3 overlay global
